CMPSCI 596/696: Operating Systems Implementation

After reading about VFS and BFS and browsing the source code of BFS and other filesystems, I started my own filesystem (called tgfs) based on BFS. I made it a module and started by implementing read_super(). As expected, the actual filesystem implementation was practically trivial - it was the interface with VFS that required a lot of work! Better documentation and sample code would have saved me a lot of time here. I ended up doing some guess-and-check work and borrowing ideas from many different filesystems such as BFS, ramfs, romfs, ncpfs... Anyway, it was a good bit of work, but in the end it was pretty satisfying to be able to mount my own filesystem, ls on it, copy files back and forth, and verify their integrity with md5sum.

This lab was good practice in reading other people's code and trying to get something out of it.

I wrote the technical report for this assignment tonight. Fun stuff. To convert it to PDF format, I used the excellent free online converter here. I'm a llama so I don't use PostScript if at all possible.

Implementing the fair-share scheduler took a while. After researching the scheduler (reading its chapter in ULK) and reviewing the code, I decided how I wanted to modify it to implement fair-share. I added some instrumentation to the scheduler and modified my system call from lab 5 to retrieve the data. After writing and testing a monitoring program and a CPU-hogging program, I made my fair-share changes to the scheduler. They didn't work. After a couple of tries and a closer look at how the scheduler works, I approached the problem from a new direction and designed a new algorithm. This one worked on the first try. I revised my instrumentation to count the actual time each process spent running, instead of the number of times it was scheduled. Then I did a bunch of tests which all showed the results I was looking for. Not bad.
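The entry above doesn't spell out the algorithm that finally worked, so the following is only a user-space sketch of one common fair-share policy, not the kernel change itself. It assumes each user should get an equal share of CPU time no matter how many processes they run. `struct proc`, `fair_share_pick()`, `fair_share_run()` and `MAX_USERS` are hypothetical names made up for this illustration.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_USERS 8   /* assumption: small fixed number of users */

struct proc {
    int uid;              /* owner, must be in [0, MAX_USERS) */
    unsigned long ticks;  /* time this process has actually run */
};

/* Choose the next process to run: first the user with the least total
 * run time, then that user's process with the least run time.
 * Returns an index into procs, or -1 if there are no processes. */
int fair_share_pick(const struct proc *procs, size_t n)
{
    unsigned long user_ticks[MAX_USERS] = {0};
    int has_proc[MAX_USERS] = {0};
    int best_uid = -1, best = -1;

    for (size_t i = 0; i < n; i++) {
        assert(procs[i].uid >= 0 && procs[i].uid < MAX_USERS);
        user_ticks[procs[i].uid] += procs[i].ticks;
        has_proc[procs[i].uid] = 1;
    }
    for (int u = 0; u < MAX_USERS; u++) {
        if (has_proc[u] &&
            (best_uid < 0 || user_ticks[u] < user_ticks[best_uid]))
            best_uid = u;
    }
    for (size_t i = 0; i < n; i++) {
        if (procs[i].uid == best_uid &&
            (best < 0 || procs[i].ticks < procs[best].ticks))
            best = (int)i;
    }
    return best;
}

/* Simulate total_ticks scheduling decisions, one tick per decision. */
void fair_share_run(struct proc *procs, size_t n, unsigned long total_ticks)
{
    for (unsigned long t = 0; t < total_ticks; t++) {
        int idx = fair_share_pick(procs, n);
        if (idx < 0)
            return;
        procs[idx].ticks++;
    }
}
```

With one user running a single CPU hog and another running three, calling `fair_share_run()` for 600 ticks gives the lone hog half of the time and splits the other half three ways. Counting `ticks` as time actually run, rather than the number of times scheduled, mirrors the instrumentation change described above.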

This was by far the most interesting and satisfying lab so far, I think. It was great to see the modified scheduler working according to plan.

I added per-process statistics and a windowing mechanism. Then I wrote a page fault generation program and a monitoring program to display page fault rates. For testing I ran the page fault generator in the background (sometimes several instances) and gave each PID to the monitoring program, which shows system-wide page fault rate along with rates for as many processes as I specify on the command line.
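The windowing idea can be sketched in user space as a small ring buffer of counter snapshots. This is an illustration under assumptions, not the kernel code from the lab: the names `struct fault_window`, `fw_init()`, `fw_sample()` and `fw_rate()` are hypothetical, and the window is assumed to be a fixed number of samples rather than a fixed time span.

```c
#include <assert.h>
#include <stddef.h>

#define WINDOW_SLOTS 8   /* assumption: window holds the last 8 samples */

struct fault_window {
    unsigned long count[WINDOW_SLOTS]; /* cumulative fault counter per sample */
    double when[WINDOW_SLOTS];         /* sample time in seconds */
    size_t next;                       /* slot that will be overwritten next */
    size_t used;                       /* number of valid slots */
};

void fw_init(struct fault_window *w)
{
    assert(w != NULL);
    w->next = 0;
    w->used = 0;
}

/* Record the current cumulative fault count, overwriting the oldest
 * sample once the window is full. */
void fw_sample(struct fault_window *w, unsigned long total_faults, double now)
{
    assert(w != NULL);
    w->count[w->next] = total_faults;
    w->when[w->next] = now;
    w->next = (w->next + 1) % WINDOW_SLOTS;
    if (w->used < WINDOW_SLOTS)
        w->used++;
}

/* Faults per second between the oldest and newest samples in the window. */
double fw_rate(const struct fault_window *w)
{
    size_t newest, oldest;
    double dt;

    assert(w != NULL);
    if (w->used < 2)
        return 0.0;
    newest = (w->next + WINDOW_SLOTS - 1) % WINDOW_SLOTS;
    oldest = (w->used < WINDOW_SLOTS) ? 0 : w->next;
    dt = w->when[newest] - w->when[oldest];
    if (dt <= 0.0)
        return 0.0;
    return (double)(w->count[newest] - w->count[oldest]) / dt;
}
```

A monitoring program could keep one such window for the system-wide counter and one per PID given on the command line, sampling each at a fixed interval and printing `fw_rate()`.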

This lab wasn't so bad, but it was time-consuming to develop code inside the kernel. I managed to get a kernel panic once, and I had to restart UML and recompile the kernel many times. Doing a full recompile takes about 20 minutes. Changing one source file (not a header) and recompiling takes about 2 or 3 minutes. UML takes about 2 minutes to start and 1 minute to shut down.

I took my system call from the previous lab and modified it to count system-wide page faults. I then made a test program that prints out the value of this counter by making the system call. Now I have to incorporate timing (the window) and per-process statistics.

Wow, adding a system call to the kernel. Who would've thought it'd be so straightforward? I used the instructions for UML here. Modify a source file and a header file, add a source file and header file, and bam ... done. Ok, so I made a few mistakes trying to implement the system call, like trying to use gettimeofday() and trying to include <time.h> and <sys/time.h>, when I really needed do_gettimeofday() and "time_user.h". Fortunately I was able to just copy and paste the compiler command line shown by make to retry compiling just my source file, instead of the whole kernel each time. Also, I wasn't sure how to use copy_to_user() at first, but a quick search revealed a man page for it.

Once I got the kernel to compile ok, the test program was short and sweet. The only quirk was figuring out how to use _syscall2, but searching the kernel source revealed one example which, when compared with the example of _syscall0, made its usage quite clear. The test program worked on the first execution, which made me happy.
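For comparison, here is a rough sketch of the same kind of two-argument call made through the generic `syscall()` wrapper from glibc. Later kernels dropped the `_syscallN` macros from the headers user programs see, so this is the usual substitute. The custom call from the lab isn't shown in this entry, so `SYS_gettimeofday` stands in for it purely as an assumption, and `raw_gettimeofday()` is a hypothetical helper name.

```c
#define _GNU_SOURCE
#include <assert.h>
#include <stddef.h>
#include <sys/syscall.h>
#include <sys/time.h>
#include <unistd.h>

/* Invoke a two-argument system call directly by number, which is the
 * job a _syscall2-generated stub used to do. SYS_gettimeofday is only
 * a stand-in for the lab's own system call number. */
int raw_gettimeofday(struct timeval *tv)
{
    assert(tv != NULL);
    return (int)syscall(SYS_gettimeofday, tv, NULL);
}
```

A test program would call `raw_gettimeofday(&tv)` from `main()`, check for a return value of 0, and print `tv.tv_sec` and `tv.tv_usec`.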

Actually, this wasn't so bad. I started out by chewing up lots of time reading chapter 2 of LDD in its entirety, even though most of it was about hardware device driver concerns unrelated to a relatively simple /proc module. But it was interesting. Then I read through the sample code here and tried to make it work, but soon discovered that proc_register() is obsolete. I ended up using a combination of that sample code and the code given on the lab 3 web page, using create_proc_read_entry(). Once I got the module to build and load without insmod complaining about missing symbols, it warned me that my non-GNU-compliant module might taint the kernel! It still loaded, but I threw MODULE_LICENSE("GPL"); into my source file to get rid of the warning anyway. Searching on "module taint kernel" yielded some amusing results.

Once the module worked, the test program was easy. It was immediately obvious that gettimeofday() had much better resolution than xtime. I looked through the kernel sources to see 1) how gettimeofday() was implemented and 2) how xtime was updated. What I found made sense, and I was satisfied.
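One way to see a clock's resolution from user space is to poll it until the value changes and look at the size of the jump. The sketch below does that for gettimeofday(). It is an illustration rather than the lab's test program, and `now_usec()` and `gettimeofday_step_usec()` are hypothetical names.

```c
#include <assert.h>
#include <stddef.h>
#include <sys/time.h>

/* Current time of day in microseconds. */
static long long now_usec(void)
{
    struct timeval tv;
    gettimeofday(&tv, NULL);
    return (long long)tv.tv_sec * 1000000LL + tv.tv_usec;
}

/* Spin until the clock value changes and return the size of that change
 * in microseconds. Returns -1 if no change is seen within max_spins
 * polls. The result is an upper bound on the clock's step, since the
 * process may be preempted between polls. */
long long gettimeofday_step_usec(long max_spins)
{
    long long start;

    assert(max_spins > 0);
    start = now_usec();
    for (long i = 0; i < max_spins; i++) {
        long long t = now_usec();
        if (t != start)
            return t - start;
    }
    return -1;
}
```

Running the same loop against a value that only advances on timer ticks, as xtime did, would show jumps of a whole tick instead of a few microseconds.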

The first thing I did, after reading the lab 2 web page, was to cat a bunch of things in /proc and get a feel for it. Then I started making a list of /proc files I needed in order to write the reporting program for lab 2. "man proc" was useful here. I wrote a C program without too much effort to read the /proc files (using fopen() and fgets()), parse out the necessary info, and output it nicely. After writing myriad log analyzers and other misc. utilities in C, this was cake. Instead of using gettimeofday() to print the report date, I used my old enemy, ctime() - much easier. (At the time I didn't realize gettimeofday() would be a recurring focus in later labs, but it was ok.)
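The fopen()/fgets() approach looks roughly like the sketch below. It is not the lab 2 program: it only pulls one "Key: value kB" field out of a file such as /proc/meminfo, and `parse_kb_line()`, `read_proc_kb()` and `report_date()` are hypothetical helper names.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>
#include <time.h>

/* Parse a line of the form "Key:   12345 kB". Returns 1 and stores the
 * number in *kb_out if the line starts with "key:", otherwise 0. */
int parse_kb_line(const char *line, const char *key, long *kb_out)
{
    size_t klen;

    assert(line != NULL && key != NULL && kb_out != NULL);
    klen = strlen(key);
    if (strncmp(line, key, klen) != 0 || line[klen] != ':')
        return 0;
    return sscanf(line + klen + 1, "%ld", kb_out) == 1;
}

/* Scan a /proc-style file line by line for the given key. */
int read_proc_kb(const char *path, const char *key, long *kb_out)
{
    char line[256];
    int found = 0;
    FILE *fp = fopen(path, "r");

    if (fp == NULL)
        return 0;
    while (!found && fgets(line, sizeof line, fp) != NULL)
        found = parse_kb_line(line, key, kb_out);
    fclose(fp);
    return found;
}

/* Report timestamp via ctime(); the string ends with a newline and
 * lives in a static buffer owned by the C library. */
const char *report_date(void)
{
    time_t now = time(NULL);
    return ctime(&now);
}
```

On a Linux machine, `read_proc_kb("/proc/meminfo", "MemTotal", &kb)` should fill in the total memory in kB, and `report_date()` gives a ready-to-print header line for the report.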

During this lab I took the opportunity to optimize a few things on my Linux PC, removing unnecessary services from startup, changing my runlevel to 3 to avoid starting X automatically, and doing remote access via VNC, SSH, and FTP. I soon realized that SSH and FTP are plenty sufficient and the machine feels a lot snappier that way...

I also spent a while trying to get the UML-patched kernel to compile on my main PC using cygwin. No luck. This is probably pretty hopeless, even though it seems like cygwin should have everything needed. I overcame a makefile problem in "make dep" with mkdeps overflowing a max # of args, but then ran into lots of trouble with include files not being found...

From the beginning, I wanted a dedicated Linux PC. Fortunately, someone had just returned my old P2/400 to me out of the blue on the previous day. I upgraded it to 192MB of RAM, threw in a few other parts, and installed RedHat 9, which I had just downloaded at full speed (a blazing 768kbps) from the University of Kansas. After much grief with NIC/AP/switch incompatibility, and an unerasable CD-RW, I used a multisession CD-R to transfer files over to the Linux PC until I got a friend to make me a crossover cable. (Thanks!!) Then I spent a while exploring and configuring everything in sight.

As the night wore on, I got the Linux 2.4.23 kernel source, the corresponding UML patch, and a Mandrake root filesystem copied over. After several minutes spent decompressing these things, I proceeded to compile the patched kernel, which took 23 minutes, but didn't give me any trouble. I wasn't sure if I should change any of the options that showed up when I did "make xconfig ARCH=um", but I didn't and it seems to work just fine. When the build finished I fired up linux and it failed miserably. Then I noticed I still had to do "make modules_install". After that, it started up but my two extra virtual consoles each gave an error about port-helper and died. Ping told me about this and I got a port-helper binary from him, threw it in /usr/local/bin, and it worked fine after that. I mounted the host file system and decided to call it a night.

Left: 400MHz P2, 192MB RAM, 20GB disk, RedHat 9

Right: 2.8GHz P4, 1024MB RAM, 200GB disk, WinXP

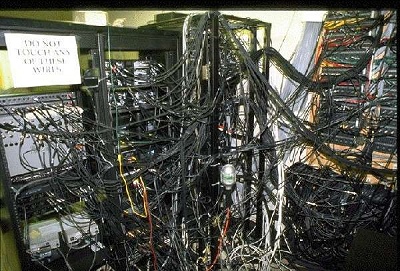

What it looks like underneath and behind my desk...